QuEra-led Study Points to Ultra-High-Rate Quantum Error Correction Moving Closer to Practical Hardware

Insider Brief

- Researchers demonstrated ultra-high-rate quantum error-correcting codes with encoding efficiency above 50% and logical error rates approaching 10⁻¹³, moving closer to practical fault-tolerant quantum computing.

- The work combines hardware co-design for neutral atom systems with a hierarchical decoding approach to reduce qubit overhead while maintaining strong error suppression under realistic noise conditions.

- The results apply to quantum memory rather than full computation, highlighting that further advances in decoding, operations, and system integration are required for complete fault-tolerant architectures.

A new study reports quantum error-correcting codes with encoding rates above 50% that can run efficiently on neutral-atom hardware while achieving extremely low logical error rates—an advance that could sharply reduce the overhead required for large-scale quantum computing.

Quantum error correction has long been seen as the central bottleneck in building useful quantum computers. Today’s leading approaches, such as surface codes, often require hundreds or even thousands of physical qubits to protect a single logical qubit. The new work, posted on arXiv by researchers from QuEra, Harvard and MIT, describes a different path: high-rate quantum codes that pack more logical qubits into fewer physical qubits without sacrificing reliability.

The study finds that these “ultra-high-rate” codes can achieve logical error rates as low as roughly 10⁻¹³ per logical qubit per correction cycle under realistic noise assumptions, approaching what researchers refer to as the “teraquop” regime—a threshold associated with running large, fault-tolerant quantum algorithms.

Reducing the Overhead Problem

The research targets scale, one of the core issues in quantum computing. Quantum bits are fragile, and errors accumulate quickly. Error correction works by encoding each logical qubit into many physical qubits, but that redundancy carries a steep resource cost. Surface-code approaches, for example, are projected to require hundreds to thousands of physical qubits per logical qubit.

The new approach builds on quantum low-density parity-check (qLDPC) codes, which distribute error correction across many qubits. These codes offer a path to higher efficiency because they allow many logical qubits to share the same pool of physical qubits.

However, previous implementations that performed well at realistic system sizes typically achieved encoding rates of around 10% or less, which limits their practical advantage. The new study builds on a recent mathematical construction using affine permutation matrices. An affine permutation rearranges a set of items by a simple rule: multiply each position by a number, add another number, and wrap around, so that everything is shuffled in a structured, reversible pattern.
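To make the "multiply, add, wrap around" rule concrete, here is a minimal Python sketch. It illustrates only the general idea of an affine permutation and is not the construction used in the paper; the function names and the example values (8 items, multiplier 3, offset 1) are chosen purely for illustration. One detail worth noting is that the shuffle is reversible only when the multiplier shares no common factor with the number of items.

```python
from math import gcd


def affine_permutation(n, a, b):
    """Return the map i -> (a*i + b) mod n as a list of new positions."""
    # The map is a true permutation only when a and n share no common factor.
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n for the map to be reversible")
    return [(a * i + b) % n for i in range(n)]


def permutation_matrix(perm):
    """Build the 0/1 matrix (list of rows) that moves item i to position perm[i]."""
    n = len(perm)
    matrix = [[0] * n for _ in range(n)]
    for i, j in enumerate(perm):
        matrix[j][i] = 1
    return matrix


def inverse_affine_permutation(n, a, b):
    """Undo the shuffle: j -> a_inv * (j - b) mod n."""
    a_inv = pow(a, -1, n)  # modular inverse, available in Python 3.8+
    return [(a_inv * (j - b)) % n for j in range(n)]


if __name__ == "__main__":
    # Illustrative values only: 8 items, multiply by 3, add 1, wrap around at 8.
    perm = affine_permutation(8, 3, 1)
    print(perm)
    for row in permutation_matrix(perm):
        print(row)
```

Each row and each column of the resulting matrix contains exactly one 1, which is what makes it a permutation matrix, and applying `inverse_affine_permutation` with the same parameters returns every item to its starting position.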

The researchers report that, with this construction, encoding rates exceeding 50% can be achieved while maintaining strong error-correcting performance.

The team also demonstrated that these high rates are not just theoretical—they can be realized at system sizes of around 1,000 qubits, which aligns with current experimental capabilities.

Hardware Co-Design Becomes Central

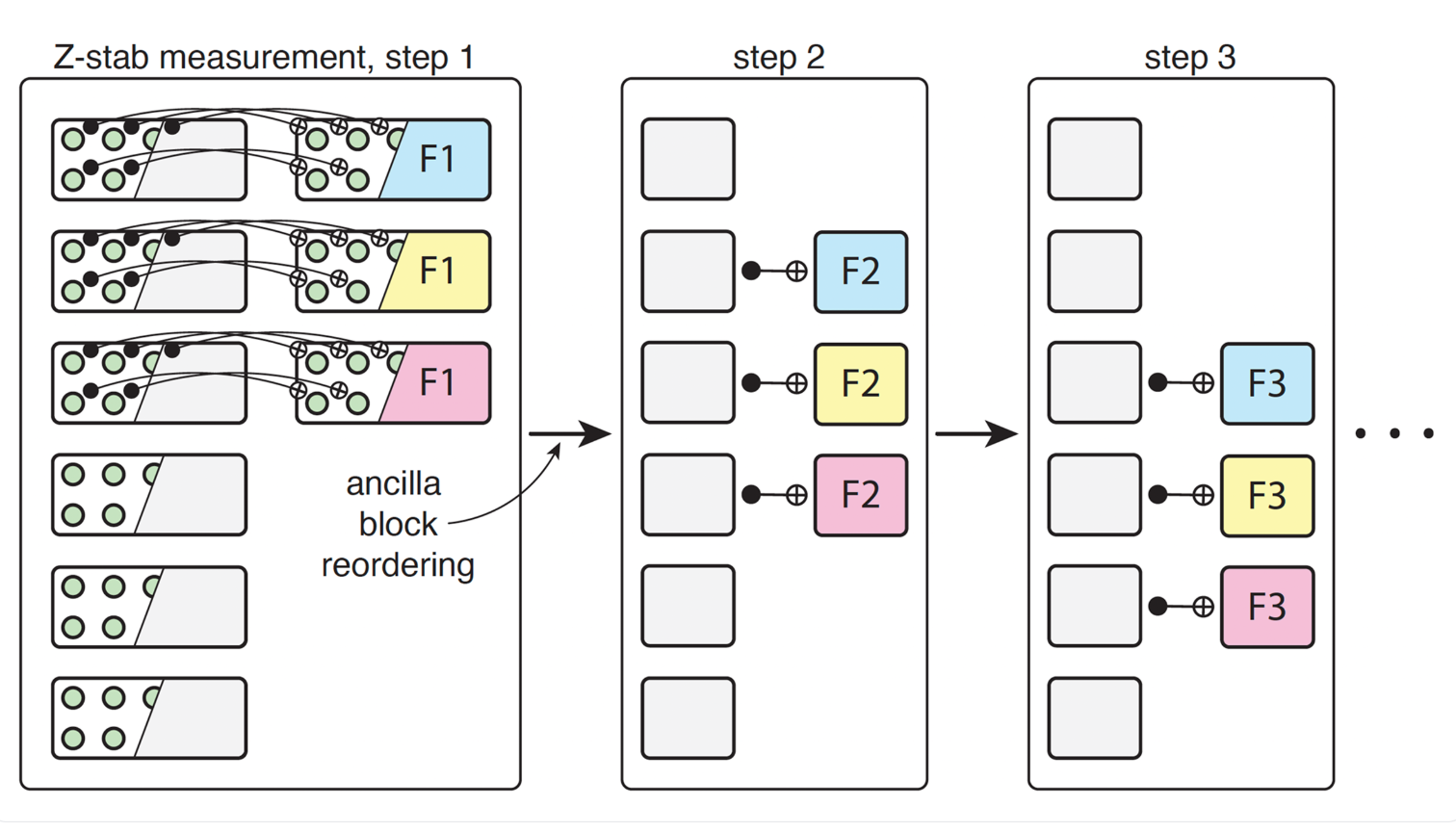

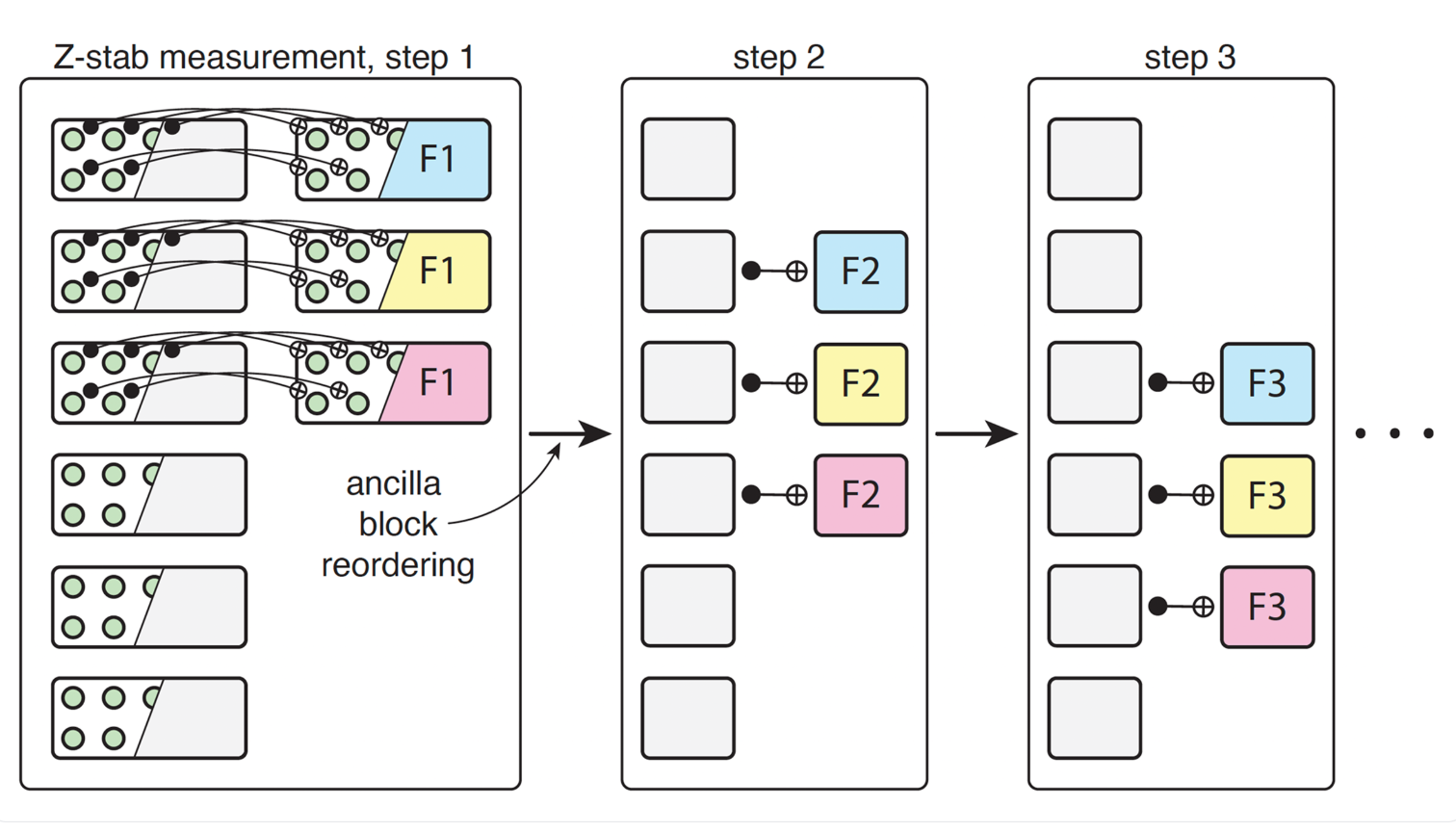

A defining feature of the work is its focus on hardware co-design. Instead of developing error-correcting codes in isolation, the researchers tailor them to match the capabilities of reconfigurable neutral atom arrays.

Neutral atom systems use lasers to trap and move atoms, allowing flexible connections between qubits. This makes them well-suited for implementing complex error-correction schemes. But the movement of atoms is constrained, typically limited to structured operations such as row and column shifts.

The study identifies mathematical conditions that ensure the required operations in the code can be implemented using these simple movements. Without these constraints, rearranging qubits during error correction could require many steps, increasing both time and error rates.

By co-designing the code with the hardware, the researchers reduce this complexity. In many cases, the required rearrangements can be completed in just a few steps, enabling faster and more reliable error correction.

To test the approach, the researchers simulate the codes under both simplified and detailed noise models. They focus on physical error rates around 0.1%, which are commonly targeted in experimental systems.

For a code using 1,152 physical qubits to encode 580 logical qubits, the study reports an average logical error rate of about 2.9 × 10⁻¹¹ per logical qubit per round. A larger code with 2,304 physical qubits and 1,156 logical qubits achieves an even lower rate of about 1.3 × 10⁻¹³.
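The encoding rate behind these figures is simply the number of logical qubits divided by the number of physical qubits. The short Python sketch below redoes that arithmetic for the two codes quoted above; the qubit counts and error rates are taken from the article, while the function and variable names are illustrative.

```python
def encoding_rate(physical_qubits, logical_qubits):
    """Fraction of physical qubits that carry logical information."""
    return logical_qubits / physical_qubits


def physical_per_logical(physical_qubits, logical_qubits):
    """Average number of physical qubits spent on each logical qubit."""
    return physical_qubits / logical_qubits


# (physical qubits, logical qubits, reported logical error rate per qubit per round)
REPORTED_CODES = [
    (1152, 580, 2.9e-11),
    (2304, 1156, 1.3e-13),
]

if __name__ == "__main__":
    for n, k, p_logical in REPORTED_CODES:
        print(
            f"n={n}, k={k}: rate {encoding_rate(n, k):.1%}, "
            f"{physical_per_logical(n, k):.2f} physical qubits per logical qubit, "
            f"reported logical error rate {p_logical:.1e}"
        )
```

Both codes come out slightly above a 50% rate, or just under two physical qubits per logical qubit, compared with the hundreds to thousands projected for surface-code approaches.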

These results stand out for combining two desirable properties — namely, high encoding efficiency and very low error rates. In practical terms, this means more computational capacity with fewer physical resources.

The simulations also show a rapid drop in logical error rates once the physical error rate falls below a certain threshold, a behavior known as the “waterfall” region. This effect is expected in high-rate codes but had not been demonstrated as clearly in realistic, finite-size systems.

A Hierarchical Decoding Strategy

Error correction depends not only on the code but also on the “decoder,” the algorithm that determines how to fix errors based on measurement data.

The study introduces a three-tier decoding approach. A fast, approximate method handles most cases. If that fails, a more refined version is applied. Only a small fraction of difficult cases are passed to an exact, computationally intensive solver.

This layered strategy balances speed and accuracy. Most errors are corrected quickly, while complex cases receive additional processing. The result is a system that can achieve high accuracy without a prohibitive computational cost.
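The control flow of such a tiered decoder can be sketched in a few lines of Python. This is a toy under stated assumptions, not the decoders used in the study: it uses a small classical code in which each check compares two neighbouring bits, and it stands in for the three tiers with brute-force searches of increasing cost (corrections of weight at most 1, then at most 2, then an exhaustive search). All names and the 7-bit size are hypothetical.

```python
from itertools import combinations

N_BITS = 7
# Toy parity checks: check i compares neighbouring bits i and i + 1.
CHECKS = [(i, i + 1) for i in range(N_BITS - 1)]


def syndrome(error):
    """Return which checks are violated by a given pattern of bit flips."""
    return tuple((error[i] + error[j]) % 2 for i, j in CHECKS)


def search_up_to_weight(target, max_weight):
    """Find the lightest correction with at most max_weight flips, or None."""
    for weight in range(max_weight + 1):
        for support in combinations(range(N_BITS), weight):
            candidate = [1 if i in support else 0 for i in range(N_BITS)]
            if syndrome(candidate) == target:
                return candidate
    return None


# Cheapest tier first; each later tier searches a larger, costlier space.
TIERS = [("fast", 1), ("refined", 2), ("exact", N_BITS)]


def hierarchical_decode(target):
    """Try each tier in turn and report which one produced the correction."""
    for name, max_weight in TIERS:
        correction = search_up_to_weight(target, max_weight)
        if correction is not None:
            return name, correction
    raise ValueError("no correction matches this syndrome")


if __name__ == "__main__":
    for error in ([1, 0, 0, 0, 0, 0, 0], [1, 1, 0, 0, 0, 0, 0], [1, 1, 1, 0, 0, 0, 0]):
        tier, correction = hierarchical_decode(syndrome(error))
        print(tier, correction)
```

In this toy, a single flipped bit is resolved by the fast tier, two adjacent flips fall through to the refined tier, and three adjacent flips reach the exhaustive tier. The pattern mirrors the trade-off described above: the expensive search runs only when the cheaper ones fail to explain the measurement data.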

This is a key consideration for real-world systems, where decoding must keep pace with quantum operations.

The findings suggest a potential change in how quantum error correction is implemented. High-rate codes tailored to specific hardware platforms could reduce the number of physical qubits required for useful computations.

This has direct implications for large-scale applications such as factoring, materials simulation and optimization problems, which are often estimated to require millions of qubits under current assumptions. Improving encoding efficiency could significantly lower those requirements.

The work also emphasizes the importance of integrating hardware and software design. By aligning code structure with hardware capabilities, the study shows that theoretical improvements can translate into practical gains.

Limitations, Future Work

The study includes several simplifying assumptions. For example, it does not account for idling errors (noise that occurs when qubits are not actively used), based on the expectation that neutral atom systems have long coherence times.

In practice, additional noise sources such as atom loss, imperfect movement, and correlated errors may affect performance. These factors will need to be examined in future work.

The decoding approach, while effective in simulations, is not yet optimized for real-time operation in hardware. Further development will be needed to ensure it can run efficiently in experimental systems.

The study also focuses on quantum memory — storing information reliably — rather than full quantum computation.

The team writes in a company blog post on the work: “To be clear about scope: this paper is a quantum memory result. We show that logical information can be stored with extremely low error rates at high encoding efficiency, and further developments will be needed to establish all ingredients for full fault-tolerant computation. But memory is the foundation. If a quantum computer can’t hold information reliably, it can’t compute reliably either. The encoding efficiency demonstrated here directly determines how large that computer needs to be.”

Extending these techniques to support logical operations introduces additional challenges.

The researchers outline several paths forward. On the coding side, expanding the design space could reveal better trade-offs between encoding rate, error tolerance, and implementation cost. Combining this approach with other code families may yield further improvements.

On the decoding side, machine-learning methods could offer gains in both speed and accuracy. Early results suggest that neural decoders may be particularly effective at handling complex error patterns.

A key next step is integrating these codes into full fault-tolerant architectures, including methods for performing logical operations and managing resources such as magic states.

The broader takeaway is that high-rate quantum error correction is moving from theory toward practical implementation. By combining advances in mathematics, hardware design, and decoding algorithms, the study demonstrates that it is possible to reduce overhead while maintaining strong performance.

The research team included Chen Zhao, Casey Duckering and Hengyun Zhou of QuEra Computing; Andi Gu of Harvard University; and Nishad Maskara of the Massachusetts Institute of Technology, where Hengyun Zhou also holds an affiliation.

For a deeper, more technical dive, please review the paper on arXiv. It’s important to note that arXiv is a pre-print server, which allows researchers to receive quick feedback on their work. However, neither an arXiv pre-print nor this article is an official peer-reviewed publication. Peer review is an important step in the scientific process to verify results.