Quantum Researchers Find Faster Path to Practical Advantage, Challenge Assumptions About Architecture Design

Insider Brief

- Researchers from Duke University, the University of Texas at Austin, and Yale University have identified a new method for parallelizing quantum computations on neutral-atom hardware that could cut execution time by up to three-fold without requiring more physical qubits.

- The team found that so-called hybrid architectures — a popular approach that mixes two types of quantum memory — are suboptimal in both space and time, challenging a widely held assumption in early fault-tolerant quantum computing design.

- The study identifies architectures capable of running scientifically meaningful quantum advantage applications with as few as 11,495 atoms and a runtime of roughly 15 hours — a concrete near-term target the authors say is achievable.

A new study finds a way to make early fault-tolerant quantum computers run up to three times faster without adding more physical qubits, and concludes that a leading design strategy used by the field is slower and more costly than previously believed.

The research, published as an arXiv preprint by scientists from Duke University, the University of Texas at Austin, and Yale University, targets neutral-atom quantum computers: machines that trap and manipulate individual atoms using focused beams of light. These systems have emerged as a leading platform for early fault-tolerant demonstrations because they can reconfigure qubit connections on the fly, a flexibility that fixed-wiring, chip-based designs such as superconducting processors cannot easily match.

The study addresses an increasingly important milestone, practical quantum advantage: the point at which a quantum computer solves a problem beyond the reach of the world’s best classical machines. Several groups have published resource estimates pointing to that threshold. This paper appears to go further, offering what the researchers call the first end-to-end simulations of quantum advantage applications executed under realistic hardware constraints, down to the timing of individual atomic movements.

Fault-tolerant quantum computers do not run on raw qubits. They combine many fragile physical qubits into logical qubits protected by quantum error-correcting codes, which spread information across many physical particles so that individual errors can be detected and corrected without destroying the computation. The tradeoff is overhead: more physical qubits, more operations, and more time.
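The core idea of redundancy can be illustrated with a classical analogy. The sketch below is not a quantum code: it uses a simple bit-flip repetition code with majority voting, assuming each copy flips independently with probability p. Real quantum codes must also handle phase errors and cannot simply copy states, but the same effect appears: spending more physical resources drives the logical error rate below the physical one.

```python
from math import comb


def repetition_logical_error(n: int, p: float) -> float:
    """Probability that majority voting over n copies fails, i.e. that more
    than half of the n bits flip, each independently with probability p.
    Classical bit-flip analogy only; n is assumed to be odd."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


for n in (1, 3, 5):
    print(n, repetition_logical_error(n, 0.01))
```

With a 1% physical error rate, three copies already push the failure rate to roughly 3 in 10,000, and five copies push it lower still, at the cost of more physical bits per logical bit.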

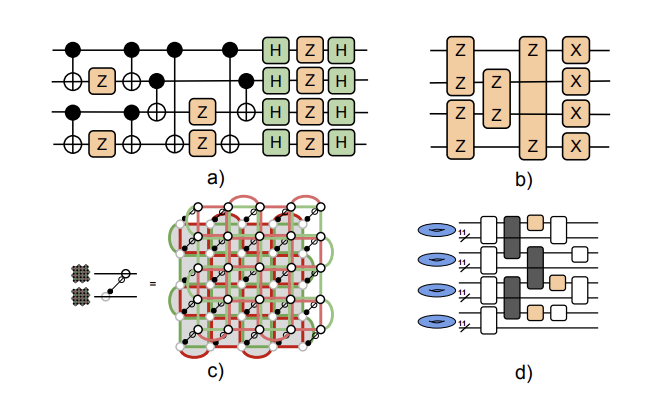

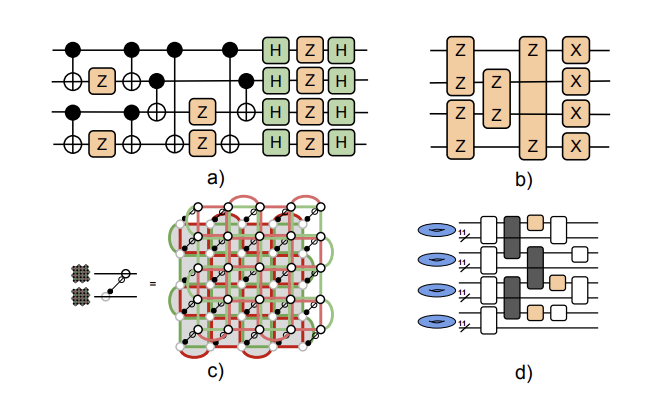

The most spatially efficient designs, known as extractor or code-surgery architectures, perform all computation on high-rate quantum low-density parity-check (QLDPC) codes, which pack many logical qubits into fewer physical qubits than older designs such as the surface code. The catch is speed. These architectures execute a critical subroutine, R(φ) synthesis, largely in serial: it approximates an arbitrary rotation gate with a sequence of simpler operations, and each gate must finish before the next begins.
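The space advantage of QLDPC codes can be checked with back-of-the-envelope arithmetic. This sketch makes several assumptions: a rotated surface-code patch of distance d uses d*d data qubits plus d*d - 1 measure qubits; the two-gross code is the [[288, 12, 18]] code (288 data qubits, 12 logical qubits, distance 18); and it needs an equal number, 288, of check qubits for syndrome extraction. It ignores factories, routing space, and the extra ancillas that code surgery requires, so it overstates the real-world gap.

```python
def surface_code_qubits(distance: int) -> int:
    """Rotated surface-code patch: d*d data qubits plus d*d - 1 measure qubits."""
    return 2 * distance * distance - 1


# Assumed two-gross [[288, 12, 18]] layout: 288 data qubits + 288 check qubits.
TWO_GROSS_DATA = 288
TWO_GROSS_CHECKS = 288
TWO_GROSS_LOGICAL = 12
TWO_GROSS_DISTANCE = 18


def compare(n_logical: int = TWO_GROSS_LOGICAL, distance: int = TWO_GROSS_DISTANCE):
    """Physical qubits for n_logical surface-code patches vs. one two-gross block."""
    surface = n_logical * surface_code_qubits(distance)
    two_gross = TWO_GROSS_DATA + TWO_GROSS_CHECKS
    return surface, two_gross, surface / two_gross


surface, two_gross, ratio = compare()
print(surface, two_gross, round(ratio, 1))  # 7764 576 13.5
```

At matching distance, storing 12 logical qubits takes roughly 13 times fewer physical qubits in the QLDPC block under these assumptions, which is why extractor architectures are attractive despite their speed problem.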

The researchers identified that serial bottleneck as the dominant source of slowdown. They also noticed that many of the logical qubit modules in the architecture are unused during synthesis. On most quantum hardware, those idle resources are simply wasted. On neutral-atom systems, they need not be.

A Teleportation Trick

The team’s solution is a gate-teleportation scheme that hijacks those idle modules to run multiple non-Clifford gate injections at the same time. These injections are the expensive operations at the heart of universal quantum computation, and extractor architectures normally perform them one after another. In this context, teleportation does not mean moving information across space: gate teleportation uses pre-shared entanglement to transfer the effect of a gate onto a qubit without applying the gate to it directly.
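The basic primitive can be simulated in a few lines. The sketch below is the standard textbook single-qubit magic-state injection, not the paper’s parallel gadget (which additionally entangles and disentangles pairs of idle ancilla modules): a CNOT from the data qubit onto a magic state T|+⟩, a Z measurement of the ancilla, and an S correction when the outcome is 1. Either way, the data qubit ends up in T|ψ⟩ without T ever being applied to it directly.

```python
import cmath
import math

W = cmath.exp(1j * math.pi / 4)  # T = diag(1, W)


def apply_t(psi):
    """Reference: apply T directly to a single-qubit state [a, b]."""
    a, b = psi
    return [a, W * b]


def inject_t(psi, outcome):
    """Teleport T onto psi by consuming a magic state T|+>, given the ancilla outcome."""
    a, b = psi
    magic = [1 / math.sqrt(2), W / math.sqrt(2)]  # T|+>
    # Joint state |data, ancilla>, indexed as 2 * data + ancilla.
    state = [a * magic[0], a * magic[1], b * magic[0], b * magic[1]]
    # CNOT with data as control, ancilla as target: swaps |10> and |11>.
    state[2], state[3] = state[3], state[2]
    # Project the ancilla onto |outcome> and renormalize the data qubit.
    data = [state[outcome], state[2 + outcome]]
    norm = math.sqrt(sum(abs(x) ** 2 for x in data))
    data = [x / norm for x in data]
    if outcome == 1:
        data = [data[0], 1j * data[1]]  # S = diag(1, i) turns T-dagger into T
    return data


def overlap(u, v):
    """|<u|v>|, equal to 1 when u and v agree up to a global phase."""
    return abs(sum(x.conjugate() * y for x, y in zip(u, v)))


psi = [0.6, 0.8j]
for m in (0, 1):
    print(m, round(overlap(inject_t(psi, m), apply_t(psi)), 9))  # 1.0 for both outcomes
```

The Clifford correction is cheap; the expensive part in a fault-tolerant machine is producing the magic state itself, which is why factory throughput matters later in the study.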

The scheme works in three stages. First, pairs of idle logical ancilla qubits are entangled via a two-qubit measurement, creating the shared resource needed for teleportation. Second, the actual gate — a T-state injection, which is the primitive operation used to build non-Clifford gates in fault-tolerant computing — is applied using a special magic-state resource prepared by a separate factory. Third, the entangled ancillas are disentangled and reset. The whole gadget takes three time steps of overhead but enables the parallel execution of several injections that would otherwise run one after another.

The speedup scales with the number of idle modules available. When the circuit involves more logical qubits and leaves more modules unused during any given synthesis step, the parallelization yields greater benefit. The researchers find the scheme begins to pay off once an application involves at least 10 modules, or about 100 logical qubits.
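A toy cost model makes the scaling argument concrete. It is an assumption-laden sketch, not the paper’s scheduler: each injection is assumed to take `t_inj` time steps, up to `idle_slots` injections (a hypothetical stand-in for the number of usable idle modules) run together in one batch, and each batch pays the three-step gadget overhead the article describes. Real R(φ) synthesis has outcome-dependent corrections that this ignores.

```python
import math

GADGET_OVERHEAD = 3  # extra time steps per parallel batch, as described in the article


def serial_steps(n_injections: int, t_inj: int) -> int:
    """Baseline extractor schedule: injections run one after another."""
    return n_injections * t_inj


def parallel_steps(n_injections: int, idle_slots: int, t_inj: int) -> int:
    """Toy parallel schedule: batches of up to `idle_slots` injections, each batch
    paying the gadget overhead. Falls back to serial if that would be faster."""
    if idle_slots < 1:
        raise ValueError("idle_slots must be at least 1")
    batches = math.ceil(n_injections / idle_slots)
    parallel = batches * (t_inj + GADGET_OVERHEAD)
    return min(parallel, serial_steps(n_injections, t_inj))


def speedup(n_injections: int, idle_slots: int, t_inj: int = 1) -> float:
    """Ratio of serial to (toy) parallel execution time."""
    return serial_steps(n_injections, t_inj) / parallel_steps(n_injections, idle_slots, t_inj)


for slots in (2, 4, 6, 8):
    print(slots, speedup(48, slots))  # 1.0, 1.0, 1.5, 2.0
```

In this toy model the break-even point is set by the ratio of gadget overhead to injection time; the paper’s threshold of about 10 modules comes from its full hardware model, which this sketch does not reproduce.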

Across four quantum physics benchmarks, including simulations of the Heisenberg model, two variants of the transverse-field Ising model, and the Fermi-Hubbard model, the new scheme achieves up to roughly 3x speedup over the baseline extractor architecture at no additional qubit cost. For the Fermi-Hubbard model with 200 logical qubits, it even surpasses transversal architectures, which use a fundamentally different design based on the surface code, in total execution time.

Hybrid Designs Come Up Short

One focus of the paper is hybrid, or load/store, architectures, a design class that has attracted substantial interest as a potential compromise between the spatial efficiency of QLDPC codes and the computational flexibility of error-correcting codes that support transversal gates, which act on logical qubits by applying the same operation to each corresponding physical qubit in parallel.

In a hybrid architecture, logical qubits are stored in a dense QLDPC code but shuttled into a smaller surface-code region for computation, then shuttled back. The appeal is cheap memory paired with a compute region where gates are easy. The problem, the study finds, is that this repeated loading and unloading, called thrashing, is itself expensive.

The team modeled three variants of hybrid compilation policy, varying how aggressively computation was delegated to memory and how many surface-code compute blocks (K) were reserved. In every case, across a suite of industry-standard quantum circuit benchmarks, hybrid strategies failed to match either transversal or extractor architectures on spacetime volume, a metric that combines space and time. With small compute regions, thrashing overwhelms any benefit from the high-rate memory. With large compute regions, the spatial overhead balloons.

“At small K, hybrid architectures suffer from excessive thrashing operations, yielding time costs comparable to extractor architectures while having additional spatial overhead,” the researchers write. “As K increases, the relative improvement in time is not proportional to the increase in space.”
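Thrashing has a familiar analogue in classical caching. The sketch below is a hypothetical illustration rather than any of the paper’s three compilation policies: it treats K compute blocks as cache slots under least-recently-used (LRU) eviction, counts the loads an access trace of logical qubits triggers, and multiplies space by time with placeholder unit costs to mimic a spacetime-volume comparison.

```python
from collections import OrderedDict


def count_loads(trace, k):
    """Count loads into k compute slots under LRU eviction (hypothetical policy)."""
    resident = OrderedDict()
    loads = 0
    for qubit in trace:
        if qubit in resident:
            resident.move_to_end(qubit)  # hit: mark as most recently used
            continue
        loads += 1  # miss: load the logical qubit from QLDPC memory
        if len(resident) >= k:
            resident.popitem(last=False)  # store the least recently used qubit back
        resident[qubit] = True
    return loads


def toy_spacetime(trace, k, memory_space=10.0, slot_space=1.0, load_time=2.0):
    """Toy spacetime volume: (memory + k compute slots) x (ops + load delays).
    All unit costs are hypothetical placeholders."""
    space = memory_space + k * slot_space
    time = len(trace) + count_loads(trace, k) * load_time
    return space * time


trace = list(range(6)) * 4  # cycle through six logical qubits, four times
for k in (2, 5, 6, 12):
    print(k, count_loads(trace, k), toy_spacetime(trace, k))
```

With too few slots every access misses and the load delays dominate; once the working set fits, extra slots only add space. The toy numbers are not the paper’s results, but the two failure regimes have the same shape as the one the authors describe.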

The result is important because hybrid architectures have been an active area of development, and several prior papers have proposed them as a practical path toward early fault-tolerant demonstrations. The study’s conclusion — that they are suboptimal on both dimensions simultaneously — suggests the field may need to reconsider that trajectory.

From Theory to Hardware

To move beyond abstract estimates, the researchers built explicit simulation models that account for the quirks of real neutral-atom hardware. Measurement in these systems, the step used to extract error syndromes and correct faults, runs roughly 1,000 to 10,000 times slower than gate operations: a gate might take a microsecond, while a measurement can take up to 10 milliseconds. Nearly all of a fault-tolerant application’s wall-clock time is therefore spent waiting for measurements.
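Simple arithmetic shows why measurement dominates. The sketch assumes a hypothetical syndrome-extraction round of eight gate layers at 1 microsecond each followed by a single measurement, and it ignores shuttling; the layer count is a placeholder, not a figure from the paper.

```python
def measurement_share(gate_layers_per_round: int, t_gate: float, t_meas: float) -> float:
    """Fraction of one syndrome-extraction round spent measuring (times in seconds)."""
    gate_time = gate_layers_per_round * t_gate
    return t_meas / (gate_time + t_meas)


# Hypothetical round: 8 gate layers of 1 microsecond each, then one measurement.
for t_meas in (1e-3, 10e-3):
    print(t_meas, round(measurement_share(8, 1e-6, t_meas), 4))
```

Even with a 1-millisecond measurement, more than 99% of each round is spent measuring, so shaving gate time barely moves total runtime while removing measurement rounds does.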

The simulations also accounted for the nondeterminism of T-state factories, which are the components that produce the magic states needed for non-Clifford gates. These factories succeed probabilistically. The study used a discard rate of 80%, meaning 80% of factory attempts are discarded and must be retried. With a cultivation protocol from a companion paper, the team found that roughly 15 factories bring performance close to the ideal unlimited-supply scenario.
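An 80% discard rate means each attempt succeeds with probability 0.2, so a single factory needs on average 1 / 0.2 = 5 attempts per magic state. The Monte Carlo sketch below shows why several factories are needed to keep up with demand. Its assumptions are hypothetical: every factory makes one attempt per time step, the computation consumes one magic state per step, and surplus states are buffered without limit. The article’s figure of roughly 15 factories reflects the paper’s own timing model and cultivation protocol, which this toy does not reproduce.

```python
import random


def stall_fraction(num_factories, p_success=0.2, demand_per_step=1, steps=10_000, seed=0):
    """Fraction of time steps in which the computation waits for a magic state.

    Toy assumptions: each factory makes one attempt per step, attempts succeed
    independently with probability p_success (0.2 = an 80% discard rate), and
    unused magic states are buffered without limit.
    """
    rng = random.Random(seed)
    buffered = 0
    stalls = 0
    for _ in range(steps):
        # Count this step's successful factory attempts.
        buffered += sum(rng.random() < p_success for _ in range(num_factories))
        if buffered >= demand_per_step:
            buffered -= demand_per_step
        else:
            stalls += 1  # not enough magic states: the computation idles
    return stalls / steps


for n in (2, 5, 10):
    print(n, stall_fraction(n))
```

Below five factories the average supply cannot match a demand of one state per step, so stalls are unavoidable; well above it, stalls all but disappear.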

Low-level atom movement — shuttling atoms between positions using acousto-optic deflectors — was also modeled precisely. The researchers used simulated annealing to find efficient atom mappings and found that gate and shuttling times account for at most 1.4% of total execution time. Measurement dominates, a result that validates the study’s focus on reducing measurement steps rather than optimizing gates.
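The article does not describe the annealing cost function, so the following is a generic sketch rather than the authors’ mapper. It assigns hypothetical interacting items to grid sites and uses simulated annealing (random swap moves, Metropolis acceptance, geometric cooling) to minimize total Manhattan shuttle distance between interacting pairs. Real neutral-atom constraints, such as acousto-optic deflector row and column limits and parallel moves, are not modeled.

```python
import math
import random


def cost(placement, pairs):
    """Total Manhattan shuttle distance over interacting pairs (toy metric)."""
    total = 0
    for a, b in pairs:
        (xa, ya), (xb, yb) = placement[a], placement[b]
        total += abs(xa - xb) + abs(ya - yb)
    return total


def anneal(sites, pairs, steps=20_000, t_start=2.0, t_end=0.01, seed=0):
    """Simulated annealing over assignments of items to sites (item i -> placement[i])."""
    rng = random.Random(seed)
    placement = list(sites)
    rng.shuffle(placement)
    current = cost(placement, pairs)
    best, best_cost = list(placement), current
    for step in range(steps):
        temp = t_start * (t_end / t_start) ** (step / steps)  # geometric cooling
        i, j = rng.sample(range(len(placement)), 2)
        placement[i], placement[j] = placement[j], placement[i]  # propose a swap
        new = cost(placement, pairs)
        # Metropolis rule: always accept improvements, sometimes accept worse moves.
        if new <= current or rng.random() < math.exp((current - new) / temp):
            current = new
            if current < best_cost:
                best, best_cost = list(placement), current
        else:
            placement[i], placement[j] = placement[j], placement[i]  # undo the swap
    return best, best_cost


# Hypothetical example: nine items on a 3x3 grid, interacting in a chain.
sites = [(x, y) for y in range(3) for x in range(3)]
chain = [(i, i + 1) for i in range(8)]
best, best_cost = anneal(sites, chain)
print(best_cost)
```

A chain of nine items has a lower bound of 8 (every neighbor one site away); accepting occasional uphill moves at high temperature lets the search escape local minima that pure greedy swapping would get stuck in.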

The final results identify concrete architectures: using the two-gross code — a QLDPC code that encodes 12 logical qubits into 288 physical ones — combined with the parallelized injection scheme and cultivation T-state factories, the team finds quantum advantage in the 2D long-range transverse-field Ising model is achievable with 11,495 atoms and roughly 15 hours of runtime, at a success probability above 94%. Success probability here means the chance the entire computation finishes without an uncorrected logical error.
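The 94% success figure constrains how reliable each logical step must be. As a rough sketch, assume N independent logical steps that each fail with the same probability p, so the whole run succeeds with probability (1 - p)^N. Solving for p gives the per-step error budget. The step count below is hypothetical, since the article does not report one.

```python
import math


def max_error_per_step(target_success: float, n_steps: int) -> float:
    """Largest uniform per-step logical error rate p with (1 - p)**n_steps >= target.
    Assumes independent, identically distributed step failures."""
    # expm1 keeps precision when the answer is tiny.
    return -math.expm1(math.log(target_success) / n_steps)


def success_probability(p: float, n_steps: int) -> float:
    """Chance that n_steps independent steps all succeed."""
    return (1 - p) ** n_steps


n_steps = 10**6  # hypothetical number of logical steps
budget = max_error_per_step(0.94, n_steps)
print(f"{budget:.3e}")  # about 6.19e-08
print(success_probability(budget, n_steps))  # about 0.94
```

Every tenfold increase in the number of steps tightens the per-step budget roughly tenfold, which is why long runtimes push designs toward higher-distance codes.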

The team acknowledges several limitations. For example, the transversal architecture’s shuttling and gate costs were not fully modeled, so those simulations give a best-case lower bound on time; a fully detailed transversal simulation might narrow some of the performance gaps. The paper also focuses on specific benchmark applications, and different circuit structures might favor different architectural tradeoffs. Finally, all projections rest on assumed hardware parameters that exceed what has been demonstrated in the lab, including a physical error rate of 10⁻³ and a coherence time of 100 seconds.

The researchers suggest future work could refine low-level shuttling path optimization, explore the parallelization upper bound they derived but did not fully exploit, and extend the framework to other QLDPC code families. The paper also leaves open the question of whether fully addressable, transversal high-rate gate sets, which would change several of the comparisons, will become practical.

The research team included Sahil Khan, Yingjia Lin, Jude Alnas, Suhas Kurapati, Abhinav Anand, and Kenneth R. Brown of Duke University; Sayam Sethi and Jonathan M. Baker of the University of Texas at Austin; and Kaavya Sahay of Yale University.

For a deeper, more technical dive, please review the paper on arXiv. It’s important to note that arXiv is a preprint server, which allows researchers to receive quick feedback on their work. However, neither the paper nor this article is an official peer-reviewed publication. Peer review is an important step in the scientific process to verify results.