Study Finds More Qubits Yield Better Financial Optimization Outcomes

Insider Brief

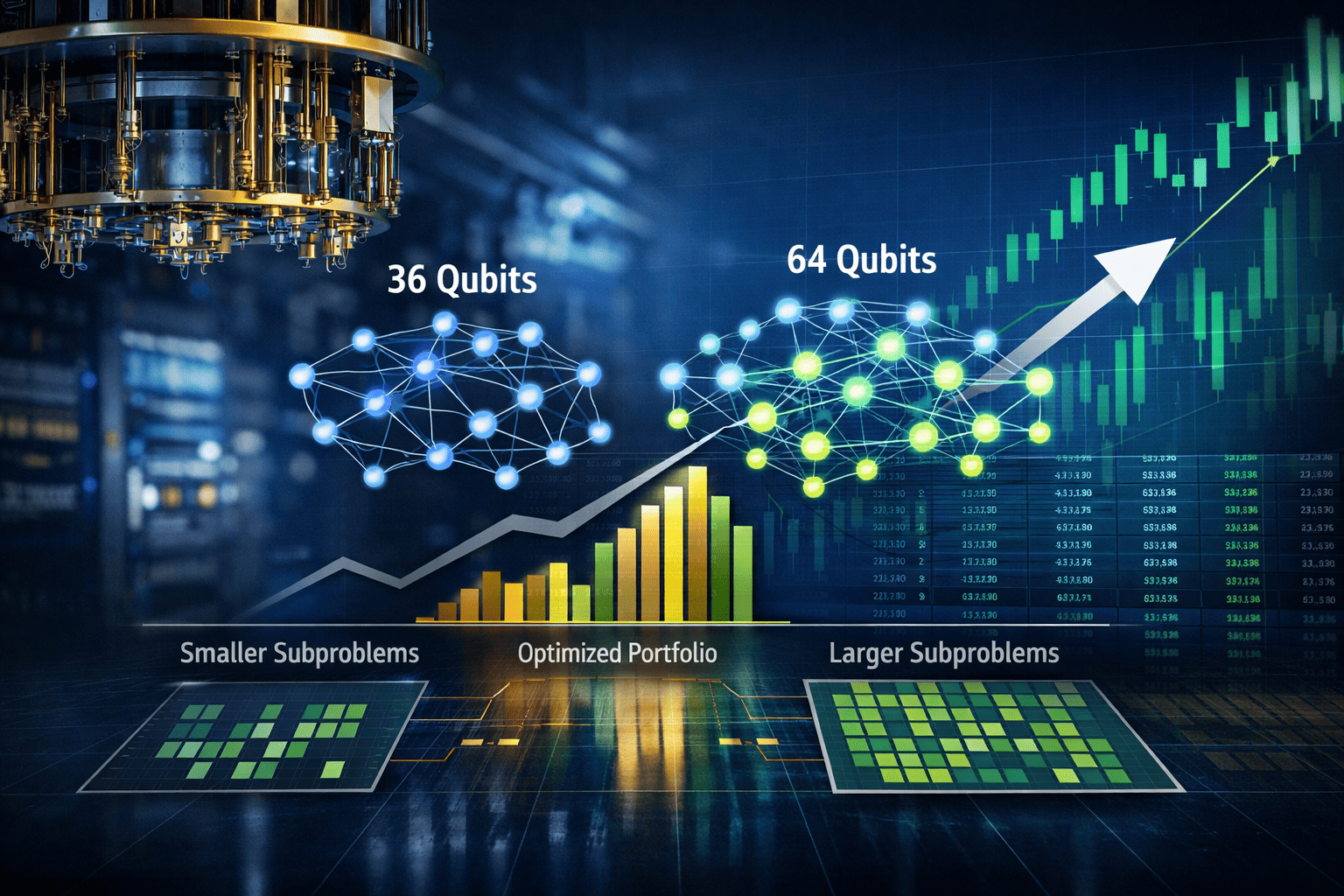

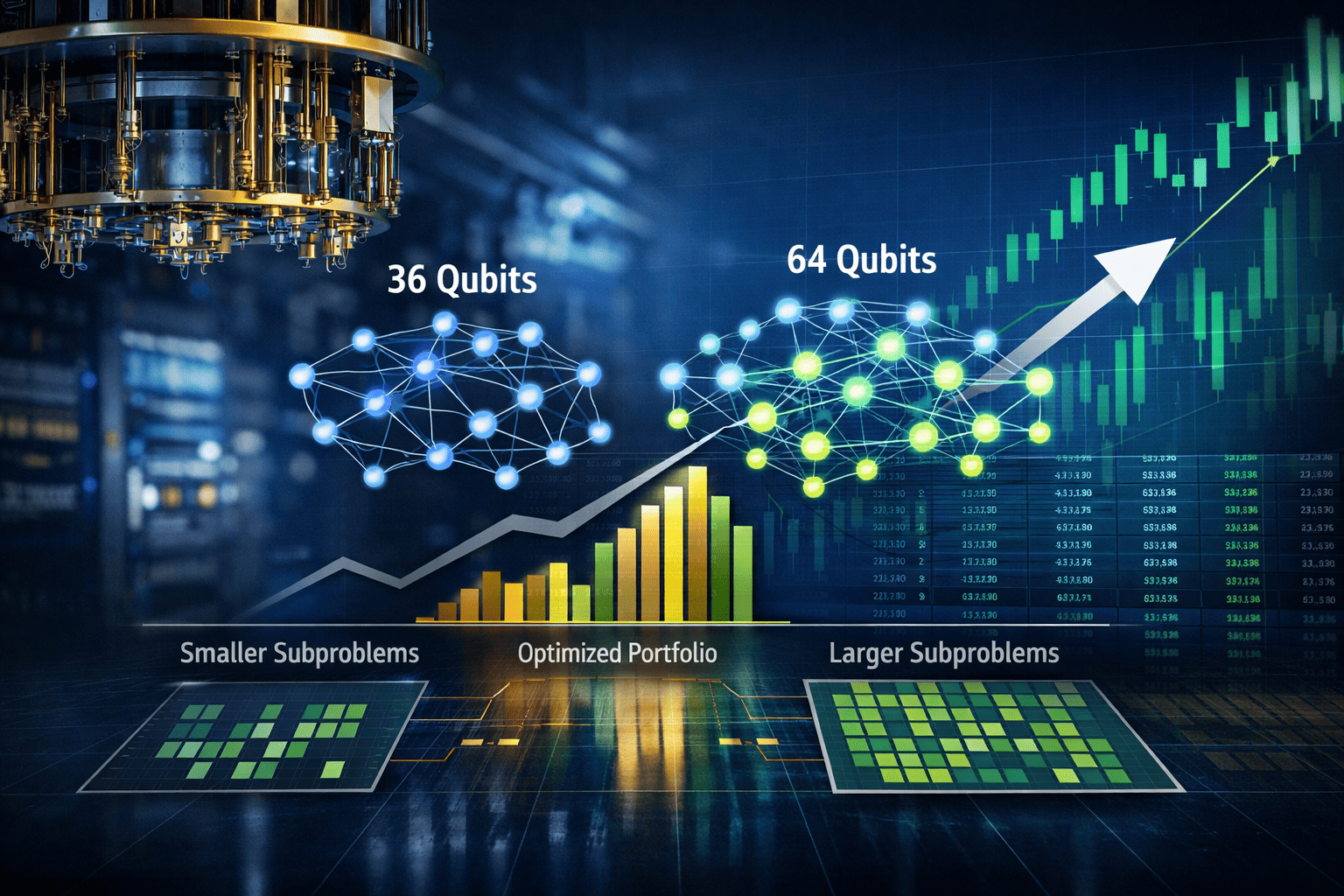

- Increasing quantum subproblem size from 36 to 64 qubits improved portfolio optimization results on a 250-asset S&P 500 dataset, showing a direct link between hardware scale and solution quality.

- The hybrid quantum-classical pipeline splits the full problem into smaller parts, solves them on trapped-ion quantum systems, and refines the results with classical optimization techniques.

- Results show quantum-generated starting points outperform random baselines and that improvements scale with qubit count without requiring changes to the underlying algorithm.

Quantum hardware is beginning to show measurable gains on real financial problems, with larger qubit systems delivering portfolio outcomes that move closer to those of a classical benchmark solver.

A blog post, detailing results from a collaboration involving IonQ and Kipu Quantum, reports that increasing the size of executable quantum subproblems — from 36 to 64 qubits — consistently improved portfolio optimization results across a 250-asset universe drawn from the S&P 500. The findings, based on experiments run on deployed trapped-ion systems, suggest that near-term quantum devices can already contribute to complex financial optimization workflows, with performance scaling directly alongside hardware capacity.

The work, which was published in a study on the pre-print server arXiv, centers on selecting a fixed number of assets from a large pool while balancing risk and return, a longstanding issue in finance. Known as cardinality-constrained portfolio optimization, the problem becomes increasingly difficult as the number of assets grows. Classical solvers can approximate solutions, but exact optimization is computationally intractable at scale, forcing reliance on heuristics.

The researchers reformulated the problem into a quadratic unconstrained binary optimization model, or QUBO, a mathematical structure that can be mapped onto quantum hardware. In this framework, each asset is represented as a binary decision — include or exclude — while the objective function encodes both expected return and risk through asset correlations.
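To make the formulation concrete, here is a minimal Python sketch of one common way to build such a QUBO. It is an illustration under stated assumptions, not the study's exact model: the function names, the `risk_aversion` weight, and the quadratic `penalty` on portfolio size are all hypothetical choices. The post indicates the quantum circuits themselves do not enforce the asset-count constraint, so setting `penalty=0.0` may be closer to what was actually run.

```python
import numpy as np

def build_qubo(mu, sigma, k, risk_aversion=1.0, penalty=0.0):
    """Return (Q, offset) so that x @ Q @ x + offset equals
    risk_aversion * x^T sigma x - mu^T x + penalty * (sum(x) - k)^2
    for any binary vector x (1 = include the asset, 0 = exclude it)."""
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    n = mu.size
    # Risk term plus the quadratic part of the expanded penalty.
    q = risk_aversion * sigma + penalty * np.ones((n, n))
    # Linear terms sit on the diagonal because x_i^2 == x_i for binary x.
    q[np.diag_indices(n)] += -mu - 2.0 * penalty * k
    offset = penalty * k**2
    return q, offset

def qubo_energy(q, offset, x):
    """Evaluate the QUBO objective for a binary selection vector x."""
    x = np.asarray(x, dtype=float)
    return float(x @ q @ x + offset)
```

With a positive `penalty`, any selection that picks exactly `k` assets pays no penalty, so its energy reduces to risk minus expected return.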

A single 250-variable QUBO cannot yet run on existing quantum machines. Instead, the team used a hybrid approach that divides the problem into smaller subproblems sized to match available qubit counts, solves those on quantum hardware, and recombines the results using classical post-processing.

Scaling With Qubit Count

A key finding, according to the researchers, is that more qubits enable better solutions.

When the subproblem size increased from 36 to 64 qubits, the resulting portfolios consistently moved closer to the global optimum calculated by the classical solver Gurobi. The improvement was not theoretical but observed directly in hardware runs, according to the post.

The reason lies in how the problem is broken up. Smaller subproblems require splitting the asset universe into more clusters, which breaks correlations between assets in different groups. Those lost correlations introduce approximation errors when the subproblems are recombined. Larger subproblems preserve more of the original correlation structure, reducing those errors.

In the reported experiments, the 36-qubit system produced 14 clusters, while the 64-qubit system reduced that to 11 clusters. Fewer clusters meant fewer severed correlations and, ultimately, higher-quality portfolios.
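A rough back-of-the-envelope count shows why fewer clusters help. The sketch below assumes, purely for illustration, that the 250 assets are split into near-equal clusters (the post does not give the actual cluster sizes) and counts how many asset pairs stay inside the same cluster. It counts pairs only and ignores how strong each correlation is.

```python
def within_cluster_pairs(n_assets, n_clusters):
    """Asset pairs that remain inside a cluster when n_assets are split
    into n_clusters groups of near-equal size (an assumption)."""
    base, extra = divmod(n_assets, n_clusters)
    sizes = [base + 1] * extra + [base] * (n_clusters - extra)
    return sum(s * (s - 1) // 2 for s in sizes)

def retained_fraction(n_assets, n_clusters):
    """Share of all asset pairs whose correlation survives the split."""
    total_pairs = n_assets * (n_assets - 1) // 2
    return within_cluster_pairs(n_assets, n_clusters) / total_pairs

for clusters in (14, 11):
    kept = within_cluster_pairs(250, clusters)
    share = retained_fraction(250, clusters)
    print(f"{clusters} clusters: {kept} of 31125 pairs kept ({share:.1%})")
```

Under the equal-size assumption, 14 clusters keep 2,108 of the 31,125 possible pairs, while 11 clusters keep 2,717. Either way most pairwise terms are severed, which is consistent with the post's emphasis on classical recombination and refinement.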

The workflow described in the post combines quantum and classical techniques across four stages.

First, the researchers apply a statistical cleaning step to the asset correlation matrix using random matrix theory. Financial data is inherently noisy, and raw correlations can contain spurious patterns that do not reflect real relationships. By filtering out the noise, the method isolates meaningful structure before optimization begins.
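The post does not say which random-matrix technique was used. One widely used option is eigenvalue clipping against the Marchenko-Pastur bound, sketched below as an assumption rather than the study's method. The function name `clip_correlation` and the `n_obs` parameter (number of return observations) are illustrative.

```python
import numpy as np

def clip_correlation(corr, n_obs):
    """Eigenvalue clipping: eigenvalues below the Marchenko-Pastur upper
    edge are treated as noise and replaced by their average."""
    corr = np.asarray(corr, dtype=float)
    n_assets = corr.shape[0]
    # Upper edge of the spectrum expected from pure noise (unit variance).
    lam_max = (1.0 + np.sqrt(n_assets / n_obs)) ** 2
    vals, vecs = np.linalg.eigh(corr)
    noise = vals < lam_max
    cleaned_vals = vals.copy()
    if noise.any():
        # Averaging keeps the trace (total variance) unchanged.
        cleaned_vals[noise] = vals[noise].mean()
    cleaned = (vecs * cleaned_vals) @ vecs.T
    # Rescale so the result is again a correlation matrix (unit diagonal).
    d = np.sqrt(np.diag(cleaned))
    return cleaned / np.outer(d, d)
```

Eigenvalues above the bound, such as a broad market mode, pass through unchanged before the final rescaling.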

Second, the cleaned data is partitioned into clusters that fit within the available qubit budget. The partitioning process ensures that each asset is assigned to exactly one cluster, avoiding data loss while respecting hardware constraints.
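As a simple illustration of a partition that respects a qubit budget, the sketch below uses a greedy heuristic: seed a cluster with an unassigned asset, then keep adding the unassigned asset most correlated with the current members until the cap is reached. This is a stand-in, not the partitioning method used in the study. Because it fills every cluster to the cap, it will not reproduce the cluster counts reported in the post.

```python
import numpy as np

def greedy_partition(corr, max_size):
    """Assign every asset to exactly one cluster of at most max_size
    members, grouping strongly correlated assets together."""
    strength = np.abs(np.asarray(corr, dtype=float))
    unassigned = set(range(strength.shape[0]))
    clusters = []
    while unassigned:
        seed = min(unassigned)
        unassigned.remove(seed)
        cluster = [seed]
        while unassigned and len(cluster) < max_size:
            candidates = sorted(unassigned)
            # Total |correlation| between each candidate and the cluster.
            scores = strength[np.ix_(candidates, cluster)].sum(axis=1)
            best = candidates[int(np.argmax(scores))]
            unassigned.remove(best)
            cluster.append(best)
        clusters.append(cluster)
    return clusters
```

Here `max_size` plays the role of the available qubit count, with one qubit per asset.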

Third, each cluster is converted into an Ising model, a mathematical representation used by quantum systems, and solved using a quantum algorithm known as Bias-Field Digitized Counterdiabatic Quantum Optimization, or BF-DCQO. Unlike many quantum algorithms, BF-DCQO does not rely on iterative parameter tuning, in which settings are repeatedly adjusted to improve the result. Instead, it derives circuit parameters analytically, making it more practical for current noisy hardware.
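The QUBO-to-Ising conversion is a standard change of variables. The sketch below assumes the convention x = (1 + s) / 2 with spins s in {-1, +1}; the study may use a different sign convention. It covers only the conversion and does not implement BF-DCQO. The function names are illustrative.

```python
import numpy as np

def qubo_to_ising(q):
    """Convert a QUBO matrix (energy x @ q @ x, x binary) into Ising
    couplings J, fields h and a constant offset, with x = (1 + s) / 2."""
    q = np.asarray(q, dtype=float)
    q = 0.5 * (q + q.T)          # symmetrizing leaves x @ q @ x unchanged
    j = q / 4.0
    np.fill_diagonal(j, 0.0)     # s_i * s_i == 1 is folded into the offset
    h = q.sum(axis=1) / 2.0
    offset = (q.sum() + np.trace(q)) / 4.0
    return j, h, offset

def ising_energy(j, h, offset, s):
    """Energy s @ J @ s + h @ s + offset for a spin vector s of +/-1."""
    s = np.asarray(s, dtype=float)
    return float(s @ j @ s + h @ s + offset)
```

For every binary vector `x`, `ising_energy(j, h, offset, 2 * x - 1)` matches `x @ q @ x`, so minimizing one objective minimizes the other.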

Finally, the results are refined using classical methods. Because the quantum circuits do not enforce the constraint on the number of selected assets, a post-processing step adjusts the solutions to meet the required portfolio size and improves them through local search techniques.
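A minimal sketch of what such post-processing could look like follows, assuming a greedy repair step followed by a swap-based local search. The post does not describe the exact procedure, so both functions and their names are illustrative. Here `q` is a QUBO matrix for the unconstrained objective and `k` is the required portfolio size.

```python
import numpy as np

def energy(q, x):
    """QUBO objective value of a binary selection vector x."""
    return float(x @ q @ x)

def repair_cardinality(q, x, k):
    """Flip one bit at a time, always the cheapest flip, until exactly
    k assets are selected."""
    x = np.array(x, dtype=float)
    while int(x.sum()) != k:
        target = 0.0 if x.sum() > k else 1.0   # drop or add an asset
        best_i, best_e = None, None
        for i in np.flatnonzero(x != target):
            trial = x.copy()
            trial[i] = target
            e = energy(q, trial)
            if best_e is None or e < best_e:
                best_i, best_e = i, e
        x[best_i] = target
    return x

def swap_local_search(q, x):
    """Swap a selected asset for an unselected one whenever that lowers
    the energy; swaps keep the number of selected assets fixed."""
    x = np.array(x, dtype=float)
    improved = True
    while improved:
        improved = False
        current = energy(q, x)
        for i in np.flatnonzero(x == 1):
            for j in np.flatnonzero(x == 0):
                trial = x.copy()
                trial[i], trial[j] = 0.0, 1.0
                if energy(q, trial) < current - 1e-12:
                    x, improved = trial, True
                    break
            if improved:
                break
    return x
```

A typical use would be `swap_local_search(q, repair_cardinality(q, sample, k))`, where `sample` is a bitstring returned by the quantum step. The search stops at a local optimum, which is not guaranteed to be the global one.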

The hybrid structure reflects the current state of quantum computing, where quantum processors handle parts of the problem that benefit from their native physics, while classical systems manage orchestration and refinement.

Hardware Advantages and Tradeoffs

The experiments were conducted on trapped-ion systems, including IonQ’s Forte platform and a 64-qubit barium-based development system. The post highlights a structural advantage of trapped-ion hardware for this type of problem.

In superconducting systems, qubits typically interact only with nearby neighbors, requiring additional operations to simulate full connectivity. These extra steps increase circuit depth and introduce errors. By contrast, trapped-ion systems allow interactions between any pair of qubits through shared vibrational modes, enabling dense problem mappings without additional overhead.

This feature is particularly relevant for financial optimization problems, which often involve dense interaction graphs where many variables are interdependent.

The study also examined circuit simplification techniques, known as pruning, which remove operations that contribute little to the final result. The findings suggest that substantial reductions in circuit complexity, removing up to 60% of gates in some cases, had minimal impact on solution quality, indicating a path to more efficient execution on near-term devices.

The benchmark compared quantum-derived portfolios against those generated by Gurobi, a widely used classical optimization solver.

While the quantum approach does not yet outperform classical methods outright, the results show that quantum-initialized solutions consistently outperform random starting points when followed by the same classical refinement process. This indicates that quantum hardware can provide a meaningful advantage as a component within a hybrid workflow.

The post also notes that some solutions that appeared suboptimal at the subproblem level improved significantly after global recombination and refinement. This suggests that the hybrid pipeline can recover interactions that are not visible during isolated cluster optimization.

Importantly, the method produces not just a single solution but a range of candidate portfolios spanning different risk-return tradeoffs. This diversity can be valuable for practitioners seeking flexibility rather than a single optimal point.

Toward Practical Applications

The implications extend beyond the specific test case because many financial tasks — including index tracking, portfolio rebalancing, and regulatory capital allocation — can be expressed in the same mathematical form. In each case, the quality of the solution depends on how much of the problem can be handled directly by quantum hardware.

The researchers report that as qubit counts increase, the need for decomposition will diminish, reducing approximation errors and improving outcomes without requiring new algorithms. This creates a clear path for incremental gains tied to hardware development.

The workflow is inherently parallel, so independent subproblems can be distributed across multiple quantum processors, a feature that could become increasingly important as cloud-based quantum infrastructure matures.

Next Steps

The post suggests that the most direct path forward is scaling hardware. The 64-qubit system used in the study is described as similar to upcoming commercial systems, and further increases in qubit count are expected to reduce cluster fragmentation and improve solution fidelity. Additional work is underway to better incorporate cross-cluster relationships during recombination, potentially reducing reliance on classical post-processing.

The broader significance lies in the demonstration itself. Running a 250-asset portfolio optimization problem, at a scale familiar to financial practitioners, on quantum hardware and benchmarking it against a leading classical solver places quantum computing within a practical, rather than purely experimental, context.

The results of this study also suggest that quantum advantage in this domain may not arrive as a sudden leap but as a steady progression tied to hardware improvements. As qubit counts rise and error rates fall, the gap between approximate and optimal solutions could narrow, opening new possibilities for problems that remain out of reach for classical methods today.

For a deeper, more technical dive, please review the paper on arXiv. It’s important to note that arXiv is a pre-print server, which allows researchers to receive quick feedback on their work. However, neither arXiv pre-prints nor this article are official peer-reviewed publications. Peer review is an important step in the scientific process to verify results.